NVIDIA MERLIN HUGECTR

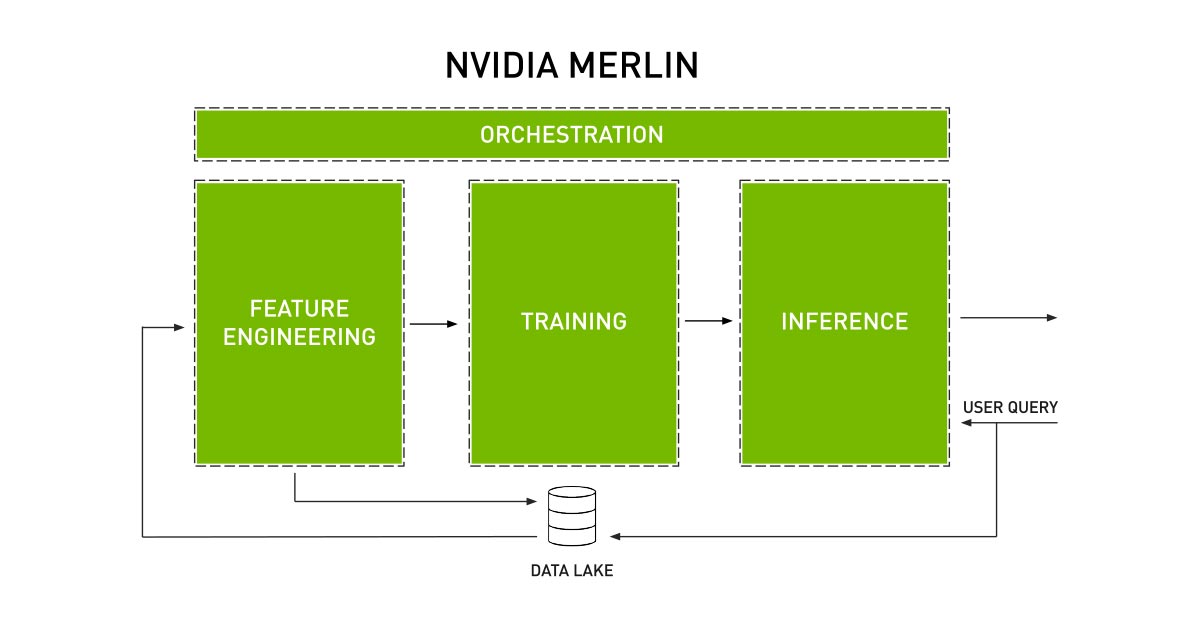

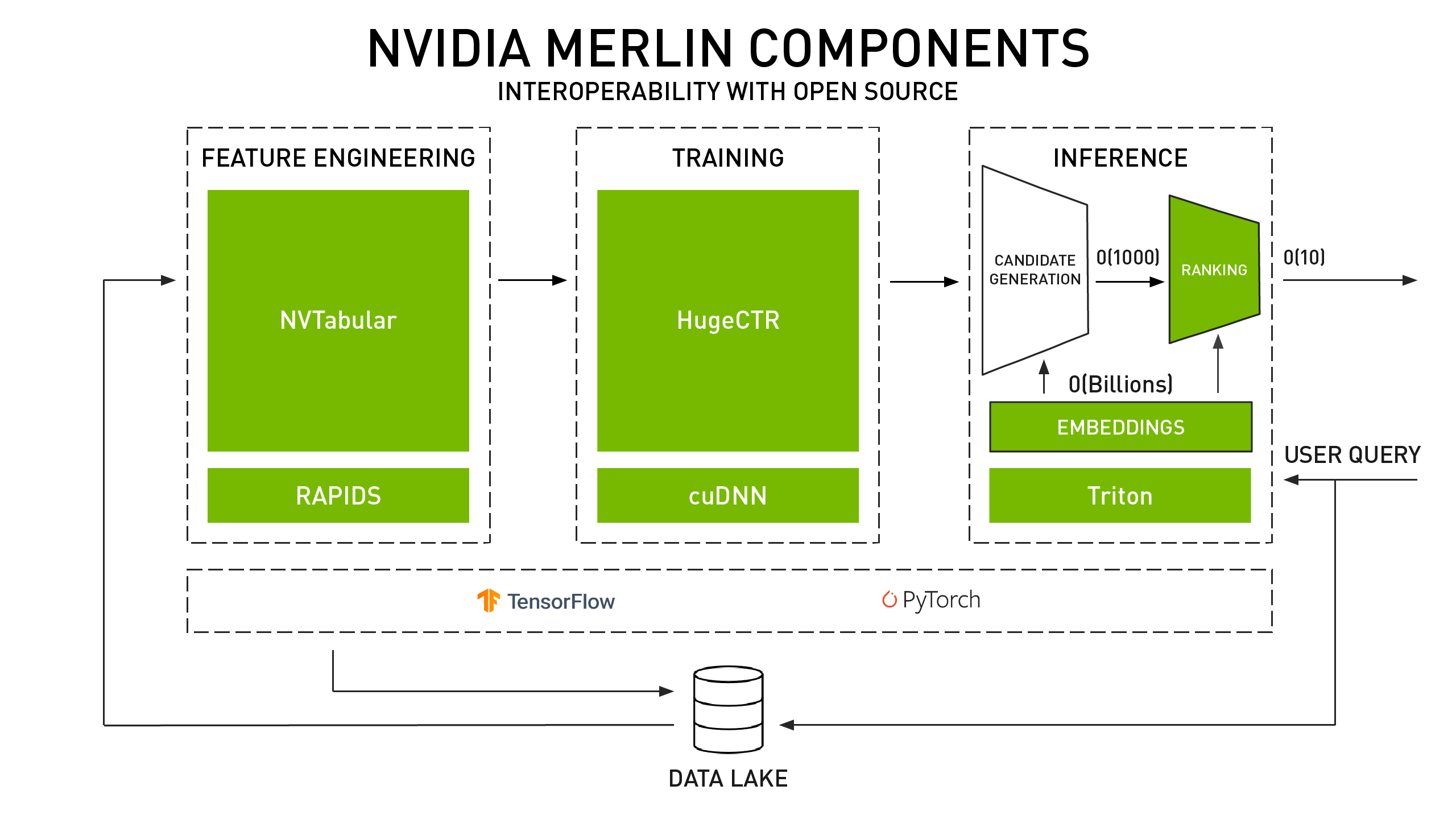

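NVIDIA Merlin™ accelerates the entire workflow for GPU-accelerated recommender systems, from ingestion and training through deployment. Merlin HugeCTR (Huge Click-Through-Rate) is a deep neural network (DNN) training framework designed for recommender systems. It provides distributed training across multiple GPUs and nodes using model-parallel embedding tables, embedding caches, and data-parallel neural networks for maximum performance. HugeCTR covers common and recent architectures such as Deep Learning Recommendation Model (DLRM), Wide and Deep, Deep Cross Network (DCN), and DeepFM.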

Download and try it now

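Merlin HugeCTR Core Features

Training Embedding Tables at Scale

Data scientists and machine learning engineers who build deep learning recommender systems work with large embedding tables that often exceed available memory. Merlin HugeCTR's model parallelism and embedding cache are designed specifically for recommender system workflows, so embedding tables of any size can be trained easily while making full use of available memory. HugeCTR also uses the NVIDIA Collective Communications Library (NCCL) for high-speed, multi-node, multi-GPU communication at scale.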
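
The idea behind a model-parallel embedding table can be illustrated with a minimal sketch. It assumes rows are assigned to shards by a simple modulo rule, and it uses plain Python dictionaries in place of GPU memory. The class `ShardedEmbeddingTable` and its methods are hypothetical names chosen for illustration and are not part of the HugeCTR API.

```python
import random


class ShardedEmbeddingTable:
    """Toy embedding table whose rows are split across several shards.

    Each shard stands in for the memory of one GPU, so no single shard
    has to hold the whole table. Illustration only, not HugeCTR code.
    """

    def __init__(self, num_shards, embedding_dim, seed=0):
        self.num_shards = num_shards
        self.embedding_dim = embedding_dim
        self._rng = random.Random(seed)
        # One dict per shard: feature id -> embedding vector.
        self.shards = [{} for _ in range(num_shards)]

    def shard_of(self, feature_id):
        # Assumed placement rule: modulo on the feature id.
        return feature_id % self.num_shards

    def lookup(self, feature_id):
        shard = self.shards[self.shard_of(feature_id)]
        if feature_id not in shard:
            # Rows are created lazily the first time an id is seen.
            shard[feature_id] = [
                self._rng.uniform(-0.05, 0.05)
                for _ in range(self.embedding_dim)
            ]
        return shard[feature_id]

    def lookup_batch(self, feature_ids):
        # Each id is routed to the shard that owns its row.
        return [self.lookup(fid) for fid in feature_ids]


table = ShardedEmbeddingTable(num_shards=4, embedding_dim=8)
vectors = table.lookup_batch([3, 7, 1_000_003, 42])
rows_per_shard = [len(shard) for shard in table.shards]
```

In a real multi-GPU setting the per-shard lookups would run on different devices and the results would be exchanged with collective communication. The sketch leaves that step out.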

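Inherently Asynchronous, Multi-Threaded Pipeline

Efficient data loading is a challenge for machine learning engineers and data scientists who continuously experiment with, train, and fine-tune recommender models. HugeCTR's data reader is inherently asynchronous and multi-threaded. It reads batches of data records that are high-dimensional, sparse, or categorical. Each record is fed directly to the fully connected layers. HugeCTR's embedding layer compresses the sparse input features into dense embedding vectors. HugeCTR's model parallelism makes it possible to train embeddings across multiple nodes and GPUs in a homogeneous cluster.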
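
As a rough illustration of what an asynchronous, multi-threaded reader does, the sketch below uses Python's standard `threading` and `queue` modules to prepare batches in background threads while the consumer iterates. The function `read_batches`, its parameters, and the record layout (`dense`, `sparse_ids`) are assumptions made for this example and do not reflect HugeCTR's actual data reader.

```python
import queue
import threading


def read_batches(records, batch_size, num_workers=2, prefetch=4):
    """Yield batches that background worker threads prepare ahead of time.

    Illustration only: batches may arrive in any order, because the
    workers run concurrently.
    """
    out = queue.Queue(maxsize=prefetch)  # bounded prefetch buffer
    starts = list(range(0, len(records), batch_size))
    done = object()  # sentinel that marks a finished worker

    def worker(worker_id):
        # Each worker handles an interleaved subset of the batches.
        for start in starts[worker_id::num_workers]:
            out.put(records[start:start + batch_size])
        out.put(done)

    threads = [
        threading.Thread(target=worker, args=(i,), daemon=True)
        for i in range(num_workers)
    ]
    for thread in threads:
        thread.start()

    finished = 0
    while finished < num_workers:
        item = out.get()
        if item is done:
            finished += 1
        else:
            yield item


# Hypothetical records: one dense value and two categorical ids each.
records = [{"dense": [0.1 * i], "sparse_ids": [i, i + 100]} for i in range(10)]
batches = list(read_batches(records, batch_size=4))
```

The bounded queue is the key design choice here: workers run ahead of the consumer by at most `prefetch` batches, which overlaps loading with training without letting memory use grow without limit.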

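Multi-GPU Inference and Hierarchical Deployment

HugeCTR supports concurrent model inference across multiple GPUs by using a parameter server together with an embedding cache that is shared among multiple model instances. HugeCTR also integrates with NVIDIA Triton™ Inference Server to simplify the workflow, making it easier for data scientists and machine learning engineers to deploy models to production.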
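
The interplay between a parameter server and a shared embedding cache can be sketched as follows. This is a toy model under stated assumptions: the cache uses least-recently-used (LRU) eviction, which is a choice made for this example and not a statement about HugeCTR's policy, and the classes `ParameterServer` and `EmbeddingCache` are hypothetical names rather than HugeCTR APIs.

```python
import threading
from collections import OrderedDict


class ParameterServer:
    """Stand-in for the store that holds the full embedding table."""

    def __init__(self, embedding_dim):
        self.embedding_dim = embedding_dim
        self.fetch_count = 0

    def fetch(self, feature_id):
        self.fetch_count += 1
        # Deterministic fake vector so the example is reproducible.
        return [float(feature_id)] * self.embedding_dim


class EmbeddingCache:
    """Small cache of hot rows shared by several model instances.

    LRU eviction is an assumption made for this sketch.
    """

    def __init__(self, server, capacity):
        self.server = server
        self.capacity = capacity
        self._rows = OrderedDict()
        self._lock = threading.Lock()  # instances may run concurrently

    def lookup(self, feature_id):
        with self._lock:
            if feature_id in self._rows:
                # Cache hit: mark the row as most recently used.
                self._rows.move_to_end(feature_id)
                return self._rows[feature_id]
            # Cache miss: fall back to the parameter server.
            row = self.server.fetch(feature_id)
            self._rows[feature_id] = row
            if len(self._rows) > self.capacity:
                self._rows.popitem(last=False)  # evict the oldest row
            return row


server = ParameterServer(embedding_dim=4)
cache = EmbeddingCache(server, capacity=2)

# Two "model instances" issue lookups through the same cache.
for fid in [1, 2, 1, 3, 2]:
    cache.lookup(fid)
```

With a capacity of two rows, the lookup sequence above produces one hit (the second request for id 1) and four fetches from the parameter server, since id 2 is evicted before it is requested again.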

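Interoperability with Open-Source Components

Machine learning engineers and data scientists combine many methods, libraries, tools, and frameworks, often including open-source components. HugeCTR is an open-source component of NVIDIA Merlin designed to optimize embedding table training in recommender system workflows. HugeCTR interoperates with other open-source components and includes an open-source Python package that supports sparse training and inference with TensorFlow.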

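Embedding Optimization

Embedding optimization enables more experimentation, more fine-tuning, and more accurate predictions at scale. The optimized embedding implementation in HugeCTR delivers up to 8x higher performance than the embedding layers of other frameworks. The same optimized implementation is also available as a TensorFlow plugin that works seamlessly with TensorFlow and serves as a convenient drop-in replacement for TensorFlow's native embedding layers.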

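Getting Started with Merlin HugeCTR

All NVIDIA Merlin components are available as open-source projects on GitHub. A more convenient way to use them, however, is through the Merlin HugeCTR container in the NVIDIA NGC catalog. Containers package the software application, libraries, dependencies, and runtime compilers in a self-contained environment. As a result, the application environment is portable, consistent, reproducible, and independent of the software configuration of the underlying host system.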

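Merlin Inference

HugeCTR supports Triton Inference Server to provide GPU-accelerated inference. With the NGC container, users can deploy NVTabular workflows and HugeCTR models to Triton Inference Server for production.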

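HugeCTR on GitHub

The GitHub repository helps users get started with HugeCTR and train models quickly through its Python interface. Available resources include documentation, tutorials, examples, and notebooks.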

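Merlin HugeCTR Resources

Tencent and Merlin HugeCTR

Learn how Tencent uses Merlin for its production advertising recommendation training and achieves more than a 7x speedup over its original TensorFlow solution on the same GPU platform.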

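Merlin Reference Applications

Get started with open-source reference implementations and achieve high inference accuracy on public datasets with up to 10x acceleration.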

Get Wide and Deep for TensorFlow

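Tencent Best Practices

Explore the insights, recommendations, and best practices that guided the design and development of Tencent's deep learning recommender system.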

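Meituan and Merlin HugeCTR

Learn how Meituan optimizes its machine learning platform by building a high-performance deep learning training framework deployed on CPU and GPU clusters.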